MoSeq

Type: Software

Keywords: Behavioral quantification, Neuroethology, Unsupervised clustering, Machine learning, Computer vision, Probabilistic graphical models, Bayesian nonparametrics, Mice

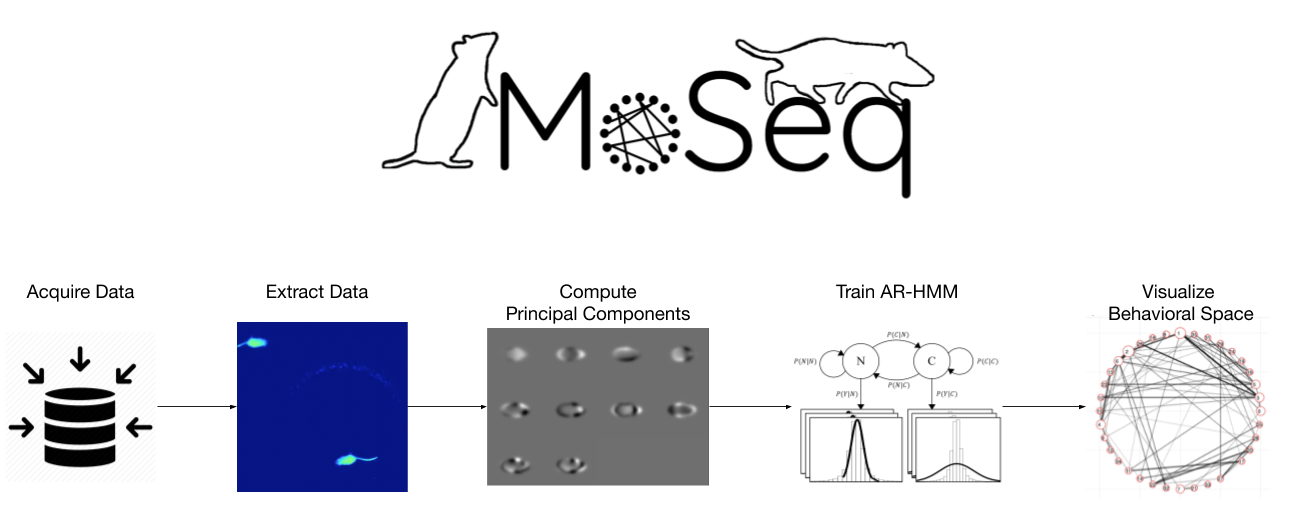

An interactive pipeline and toolkit for unsupervised discovery of repeated behavioral motifs from 3D videos, or from keypoints tracked in 2D/3D videos, of freely behaving mice

MoSeq (Motion Sequencing) provides a pipeline for quantifying 3D videos, or keypoints tracked in 2D/3D videos, of freely behaving mice and for discovering the underlying structure of mouse behavior. MoSeq automatically locates, tracks, and quantifies the mouse in each frame of the video. Typical supervised behavioral classifiers require human-labeled training data; MoSeq instead trains an unsupervised machine learning model to identify repeated motifs (or syllables) of behavior. The pipeline then offers a suite of visualization tools and statistical tests for understanding the discovered behaviors and comparing them across experimental conditions. MoSeq reduces the human labor involved in exploring mouse behavior, discovers previously unknown behaviors, and allows neuroscientists to relate neural activity to free behavior more completely.
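Once every video frame carries a syllable label, comparisons of this kind typically start from simple summary statistics over the label sequence. The sketch below is illustrative only and is not MoSeq's own code or API: the function names and the `frame_labels` list are hypothetical, and the per-frame integer labels are assumed to come from a model that has already been fit. It computes how often each syllable is used and how likely each syllable is to follow another.

```python
from collections import Counter
from itertools import groupby


def collapse_runs(labels):
    """Collapse consecutive repeats so each syllable instance appears once."""
    return [label for label, _ in groupby(labels)]


def syllable_usage(labels):
    """Fraction of syllable instances assigned to each syllable label."""
    instances = collapse_runs(labels)
    counts = Counter(instances)
    total = len(instances)
    return {label: count / total for label, count in counts.items()}


def transition_probabilities(labels):
    """Row-normalized probability of moving from one syllable to the next."""
    instances = collapse_runs(labels)
    pair_counts = Counter(zip(instances, instances[1:]))
    # Total number of transitions leaving each source syllable.
    outgoing = Counter(src for src, _ in pair_counts.elements())
    return {(src, dst): n / outgoing[src] for (src, dst), n in pair_counts.items()}


# Hypothetical per-frame labels for one short recording (not real data).
frame_labels = [0, 0, 0, 1, 1, 2, 2, 2, 0, 0, 1, 1, 1, 2]
usage = syllable_usage(frame_labels)
transitions = transition_probabilities(frame_labels)
```

Computing the same two summaries separately for each experimental group gives a simple basis for comparing conditions. Usage is counted per syllable instance rather than per frame here, so that long and short syllables are weighted equally; this is a design choice of the sketch, not a statement about how MoSeq reports usage.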

* Tool for unsupervised behavioral classification in freely behaving mice.

* Tracking and quantification of mouse posture using computer vision.

* Probabilistic graphical modeling and clustering to identify repeated motifs of behavior.

* Statistics and visualizations to understand how behavior progresses in mice.

* Tools for comparing and quantifying mouse behavior across genetic and experimental differences.

* Large-scale screens of pharmacologically and/or genetically induced behavioral phenotypes.

* Mapping of the behavioral space associated with induced activity of various brain regions.

* Unsupervised analysis of behavioral task performance (e.g. mazes, bandit tasks, etc.).

* Unsupervised analysis of mouse behavioral changes in response to salient odors.

* Comparison of behavioral phenotypes between wild type and motor-disorder model mice.

* Precise behavioral quantification of neomorphic behaviors upon optogenetic manipulation.

* Mouse/mice

* Unlike most other behavioral classification tools, MoSeq requires no labeling of training data or specification of motifs of interest.

* The MoSeq pipeline provides a robust toolkit for exploring, visualizing, and statistically validating discovered behaviors, all in a convenient graphical interface.

* Although the modeling framework behind MoSeq can discover behavioral syllables in data of nearly any kind (e.g. mouse ultrasonic vocalizations), the key limitation of the current MoSeq pipeline is that it only accepts mouse depth video under very specific recording conditions. We aim to expand the flexibility and accessibility of our toolkit in future years.

* Depth video of a freely behaving mouse: The Datta Lab advises on and provides tutorials for collecting this data.

* Wiltschko et al., 2015, Mapping Sub-Second Structure in Mouse Behavior, Neuron, https://doi.org/10.1016/j.neuron.2015.11.031

* Markowitz et al., 2018, The Striatum Organizes 3D Behavior via Moment-to-Moment Action Selection, Cell, https://doi.org/10.1016/j.cell.2018.04.019

* Wiltschko et al., 2020, Revealing the structure of pharmacobehavioral space through motion sequencing, Nature Neuroscience, https://doi.org/10.1038/s41593-020-00706-3

* In progress (see www.dattalab.org for updates)

* In progress (see www.dattalab.org for updates)

Sandeep Robert Datta, Associate Professor

Harvard Medical School, Boston, MA

TEAM / COLLABORATOR(S)

Ayman Zeine, Computer Scientist, HMS; Akshay Jaggi, Computer Scientist, HMS; Winthrop Gillis, Graduate Student, HMS; David Brann, Graduate Student, HMS; Jeff Markowitz, Postdoctoral Scholar, HMS; Caleb Weinreb, Postdoctoral Scholar, HMS

FUNDING SOURCE(S)

NIH U24-NS109520-01